Event-Grade Performance Using Locally Deployed AI Models

Large-scale events have a reliability problem. The bigger the crowd, the more the technology struggles to keep up. Real-time interactions demand consistent, split-second performance. In that environment, cloud dependency introduces a risk no brand should accept.

This piece covers how locally deployed models resolve that risk — and what that shift means for immersive brand activations at scale.

Cloud AI at High-Traffic Events: The Core Performance Gap

Cloud infrastructure performs well under normal loads. At events with 1,000+ concurrent users, it can buckle. Servers throttle. Latency climbs. AR overlays fall out of sync. Projection mapping loses precision. The visual integrity of the experience, which took weeks to build, deteriorates in real time.

Venue bandwidth makes this worse. Stadiums, expos, and large retail activations rarely offer stable fiber connectivity. Mobile hotspots overload quickly. In that setting, any cloud-dependent system becomes a liability.

Data privacy adds another layer of exposure. Events collect attendee data. GDPR compliance leaves little room for ambiguity. Transmitting data to external servers during a live activation introduces unnecessary legal and operational risk. On-site processing eliminates that exposure entirely.
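Keyed pseudonymisation is one way to keep attendee identifiers useful on-site without storing raw personal data. Interactions can still be linked to a guest, but only a device-held key can reproduce the token. The sketch below makes several assumptions: an email address serves as the attendee identifier, and the key is generated on the device and never exported. `EVENT_KEY`, the station label, and the log format are all hypothetical. Under GDPR, pseudonymised data still counts as personal data, so this reduces exposure rather than removing compliance obligations.

```python
import hashlib
import hmac
import secrets

# Hypothetical per-event key: generated on the device at setup, never exported.
EVENT_KEY = secrets.token_bytes(32)


def pseudonymise(attendee_id: str, key: bytes = EVENT_KEY) -> str:
    """Return a stable keyed pseudonym (HMAC-SHA256, hex) for an attendee identifier."""
    # Normalise so "Guest@Example.com " and "guest@example.com" map to the same token.
    normalised = attendee_id.strip().lower().encode("utf-8")
    return hmac.new(key, normalised, hashlib.sha256).hexdigest()


# Interaction logs store only the token, never the raw email address.
log_entry = {"guest": pseudonymise("Guest@Example.com "), "station": "hologram-1"}
```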

Local Deployment: Edge Hardware Running Full Inference On-Site

The operational model shifts when processing runs locally. No external calls. No dependency on venue Wi-Fi. Response times drop to under 100 milliseconds.

At Ink in Caps, this runs on NVIDIA Jetson hardware. Models containerised via Docker load in under 60 seconds and run GPU-accelerated inference without a network connection. A 30-billion-parameter model generates 50 tokens per second, matching cloud throughput without the exposure.
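The weight-memory arithmetic puts the 30-billion-parameter figure in context. This rough sketch counts weights only. It ignores the KV cache, activations, and runtime overhead, so real memory use is higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes).

    Excludes KV cache, activations, and runtime overhead.
    """
    return num_params * bits_per_weight / 8 / 1e9


for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_memory_gb(30e9, bits):.0f} GB")
# 16-bit: 60 GB
# 8-bit: 30 GB
# 4-bit: 15 GB
```

At 16-bit precision, the weights alone need about 60 GB. At 4-bit, they need about 15 GB. This is why edge deployments of models this size typically depend on quantisation.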

Models are fine-tuned on event-specific datasets before deployment. Product catalogues, guest interaction flows, and real estate visuals all inform the training set. The system knows the activation before the doors open.

Integration with existing installations is straightforward. Interactive walls run YOLOv8 for object detection. Holographic displays use Whisper for local voice transcription. No external API calls. No latency from server round-trips.
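A pre-flight check at boot can enforce the "no external API calls" rule. The system refuses to start if any configured model endpoint is not a loopback or private-network address. This is a sketch under assumptions: the service names, ports, and the `10.0.0.12` LAN node are hypothetical. Hostnames are deliberately rejected, because resolving them might require external DNS.

```python
import ipaddress
from urllib.parse import urlsplit

# Hypothetical service map: each model runs as a container on-site.
ENDPOINTS = {
    "object_detection": "http://127.0.0.1:8001/detect",
    "transcription": "http://127.0.0.1:8002/transcribe",
    "generation": "http://10.0.0.12:8003/generate",  # another node on the venue LAN
}


def is_on_site(url: str) -> bool:
    """True if the URL's host is a loopback or private-range IP literal."""
    host = urlsplit(url).hostname
    try:
        addr = ipaddress.ip_address(host)
    except (ValueError, TypeError):
        # Hostnames are rejected: resolving them could depend on external DNS.
        return False
    return addr.is_loopback or addr.is_private


def assert_no_external_calls(endpoints: dict[str, str]) -> None:
    """Raise before go-live if any endpoint would leave the venue network."""
    off_site = {name: url for name, url in endpoints.items() if not is_on_site(url)}
    if off_site:
        raise RuntimeError(f"Off-site endpoints configured: {off_site}")
```

`assert_no_external_calls(ENDPOINTS)` passes for the map above. Adding a public URL such as a hosted API raises `RuntimeError` before the activation goes live.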

Performance Specifications Worth Knowing

Sub-100ms response times across all interaction layers.

Multi-node architecture supports 5,000+ simultaneous users without performance degradation.

All attendee data remains on-device — meeting enterprise privacy standards with zero external transmission.

Offline resilience means the system functions fully without any internet connection, which is critical for remote venues or environments with unstable connectivity.

Models are fine-tuned directly on brand assets, integrating CGI renders, product catalogues, and anamorphic visual libraries into the inference pipeline.

Jetson modules draw approximately 30 W each, making the entire setup portable and independent of venue infrastructure.
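The article does not say how load is spread across nodes. Least-connections routing is one common approach, swapped in here as an assumption: each new guest session goes to the node currently serving the fewest sessions. The node names and the count of eight nodes are hypothetical.

```python
class LeastLoadedRouter:
    """Route each new guest session to the node with the fewest active sessions."""

    def __init__(self, node_ids: list[str]) -> None:
        self.active: dict[str, int] = {node_id: 0 for node_id in node_ids}

    def assign(self) -> str:
        # Ties break on node_id so assignment is deterministic.
        node_id = min(self.active, key=lambda n: (self.active[n], n))
        self.active[node_id] += 1
        return node_id

    def release(self, node_id: str) -> None:
        # Called when a guest session ends; never drops below zero.
        if self.active.get(node_id, 0) > 0:
            self.active[node_id] -= 1


router = LeastLoadedRouter([f"node-{i}" for i in range(8)])
for _ in range(5000):
    router.assign()
```

With 5,000 sessions and eight nodes, each node ends up holding 625. A linear scan over a handful of nodes is cheap. A heap would only pay off with far more nodes.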

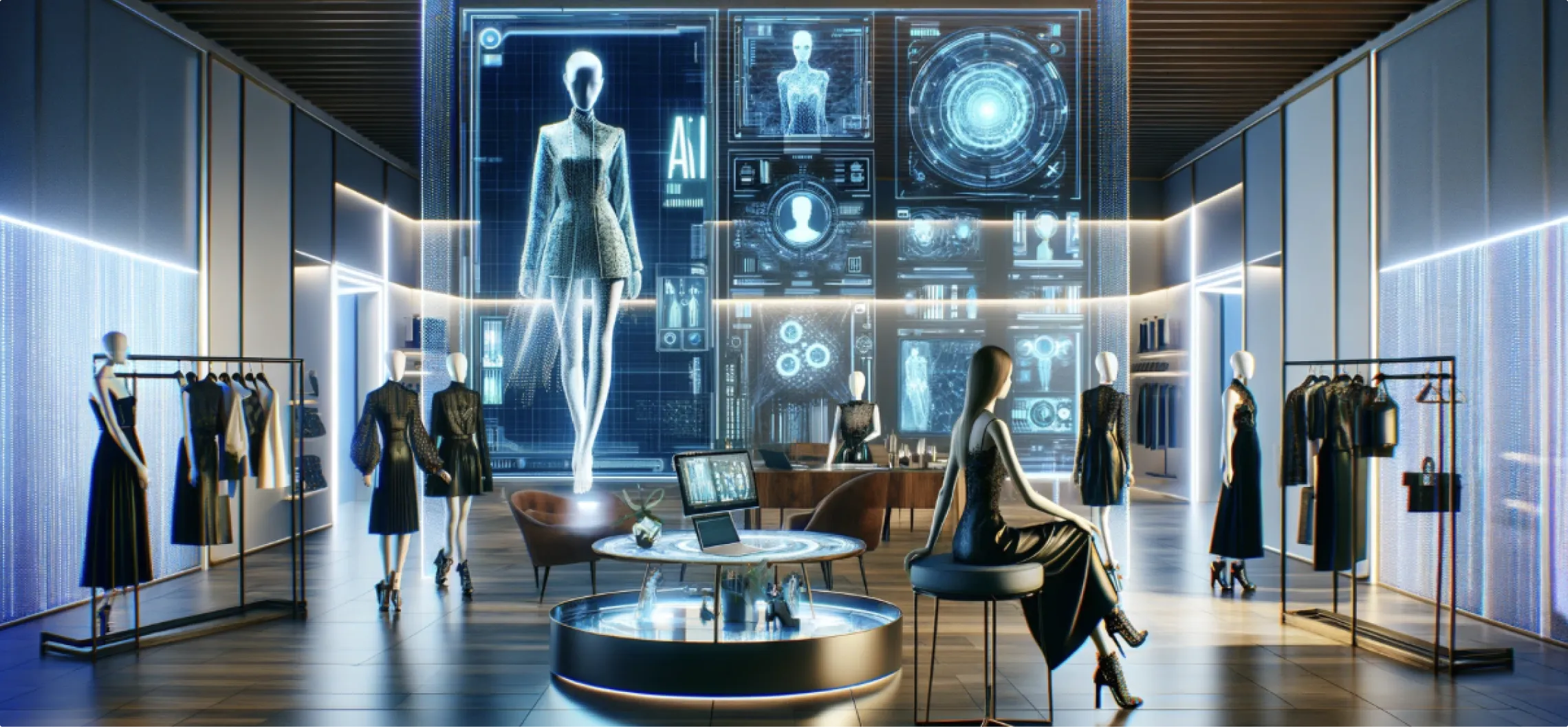

Luxury Product Launch: A 10,000 Sq Ft Local Deployment Activation

The brief required a fully immersive environment spanning 10,000 square feet. Every interaction point had to perform without fail — from the first guest arrival through peak-hour load.

Activation Architecture

A central holographic assistant anchored the space. Guests approached and spoke naturally. Local Whisper transcribed speech on-device, Llama 3 generated contextual responses, and Stable Diffusion rendered visuals in real time, synchronised across curved projection surfaces. Every component ran locally and could operate offline.
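The assistant's flow is a three-stage chain: speech to text, text to reply, and reply to visuals. The sketch below times each stage, so a monitoring view could show where latency accrues. The three stage functions are hypothetical stubs, not the Whisper, Llama 3, or Stable Diffusion APIs. In a real deployment, each stub would call the corresponding on-device runtime.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class StageTimer:
    """Records wall-clock time per pipeline stage, in milliseconds."""

    timings_ms: dict[str, float] = field(default_factory=dict)

    def run(self, name: str, fn: Callable[..., Any], *args: Any) -> Any:
        start = time.perf_counter()
        result = fn(*args)
        self.timings_ms[name] = (time.perf_counter() - start) * 1000.0
        return result


# Hypothetical stubs standing in for the on-device models.
def transcribe(audio: bytes) -> str:
    return "tell me about the new collection"


def generate_reply(prompt: str) -> str:
    return f"Here is what I know about: {prompt}"


def render_visual(reply: str) -> dict[str, str]:
    return {"surface": "curved-projection", "caption": reply}


def handle_utterance(audio: bytes) -> tuple[dict[str, str], dict[str, float]]:
    """Run speech -> reply -> visuals in sequence, timing each local stage."""
    timer = StageTimer()
    text = timer.run("transcribe", transcribe, audio)
    reply = timer.run("generate", generate_reply, text)
    frame = timer.run("render", render_visual, reply)
    return frame, timer.timings_ms
```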

Object recognition tables powered by a local CLIP model identified more than 200 product SKUs. When attendees placed prototypes on the surface, screens returned 3D models, product specifications, pricing, and AR try-on views in real time.

Anamorphic illusions extended the visual environment beyond physical boundaries. Engagement time averaged seven minutes per guest — a figure that reflects genuine interest, not passive exposure.

Technical Configuration and Uptime

Models were quantised to 4-bit precision, retaining 95% of their full-precision accuracy while reducing hardware demands. A deployment script automated node synchronisation across the entire environment. The operations team monitored performance through a centralised dashboard.
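The article does not name the quantisation method used. As an assumption, the sketch below shows the simplest variant: symmetric round-to-nearest quantisation of one weight group to 4-bit integers in [-7, 7], with a single float scale per group. Production methods are more sophisticated. The 95% accuracy figure comes from the event, not from this code.

```python
def quantise_int4(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric 4-bit quantisation of one weight group: ints in [-7, 7] plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # avoid dividing by zero for all-zero groups
    q = [max(-7, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantise(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from 4-bit codes and the group scale."""
    return [v * scale for v in q]


weights = [0.7, -0.35, 0.1, 0.0, -0.62]
codes, scale = quantise_int4(weights)
approx = dequantise(codes, scale)
```

Each weight now needs 4 bits plus a share of one scale per group, against 16 bits at half precision. Every dequantised value stays within half a scale step of its original.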

Uptime across the event: 99.9%. Zero crashes during peak-hour loads. No service interruptions.

Measurable Outcomes

Lead capture increased by 40% compared to previous activations.

Net Promoter Score reached 92.
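Net Promoter Score is calculated from answers on a 0 to 10 "how likely are you to recommend" scale. It equals the percentage of promoters (scores 9 to 10) minus the percentage of detractors (scores 0 to 6), so it ranges from -100 to 100. In this short sketch, the response distribution is hypothetical. It simply shows one way a score of 92 can arise.

```python
def net_promoter_score(scores: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6), on a -100..100 scale."""
    if not scores:
        raise ValueError("no responses")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical: 93 promoters, 6 passives, 1 detractor out of 100 responses.
responses = [10] * 93 + [8] * 6 + [3]
print(net_promoter_score(responses))  # 92.0
```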

Brand managers noted specifically that reliability was the differentiating factor. The experience held at peak load without degradation.

In a parallel retail activation using the same architecture, object recognition tables processed 200+ SKUs with real-time AR overlays. Conversion rates rose 28% across the event window.

Industry Direction: Edge Deployment Across Enterprise Events

Gartner projects that 75% of enterprise AI will be edge-deployed by 2027. Events and experiential environments are accelerating that shift — the performance requirements demand it.

Open-source model stacks (Llama, Mistral, Phi-3) make this operationally viable. LoRA adapters allow fine-tuning in weeks rather than months. Costs have fallen to the point where sophisticated local deployment is accessible across budget tiers.
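LoRA keeps a pretrained weight matrix W (d x k) frozen. It trains two small matrices, B (d x r) and A (r x k), whose product is added to W. The trainable parameter count therefore drops from d*k to r(d + k). The sketch computes that ratio. The 4096 x 4096 projection and rank 16 are illustrative values, not settings from this article.

```python
def lora_trainable_fraction(d: int, k: int, rank: int) -> float:
    """Fraction of a d x k layer's parameters that a rank-r LoRA adapter trains."""
    full = d * k              # parameters in the frozen weight matrix W
    adapter = rank * (d + k)  # parameters in B (d x r) plus A (r x k)
    return adapter / full


fraction = lora_trainable_fraction(4096, 4096, 16)
```

For that layer, LoRA trains about 0.78% of the parameters the full matrix holds. This is a large part of why adapter fine-tuning is faster and cheaper than full fine-tuning.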

Thermal management in high-occupancy venues, hardware selection for specific environments, and pre-event simulation testing remain areas where execution quality separates reliable deployments from risky ones. These are operational disciplines, not afterthoughts.

The next development layer: multimodal vision-language integration for AR, and local diffusion generating real-time CGI for dynamic projection mapping. The capability already exists. Deployment maturity determines the outcome.

Reliability at Scale Requires the Right Infrastructure

Brand activations at this level carry significant investment and significant reputational stakes. The infrastructure running those environments should match that weight.

Local deployment removes the variables that cause failures in public: network dependency, cloud throttling, data exposure. What remains is a system that performs precisely as designed — regardless of crowd size, venue limitations, or connectivity.

If you're planning an activation, product launch, or Experience Centre and want to understand what this architecture looks like for your specific environment, Ink in Caps builds and deploys these systems — tailored to your brand assets, tested under load, and built to hold. Reach out to schedule a technical walkthrough.
