Human-in-the-Loop Controls for AI Assistants at Live Activations

Real-time activations leave no room for error. Every second of lag, every misread query, every misaligned response — visitors notice. And so do your stakeholders.

Brands investing in immersive experience centers, product launches, and large-scale installations expect their technology to perform under pressure. But even the most sophisticated AI assistant will encounter conditions it wasn't trained for — regional dialects, mixed-language inputs, niche product queries, crowd-driven data spikes.

That gap between AI capability and live event complexity requires a deliberate operational layer. Human-in-the-loop controls fill that gap precisely.

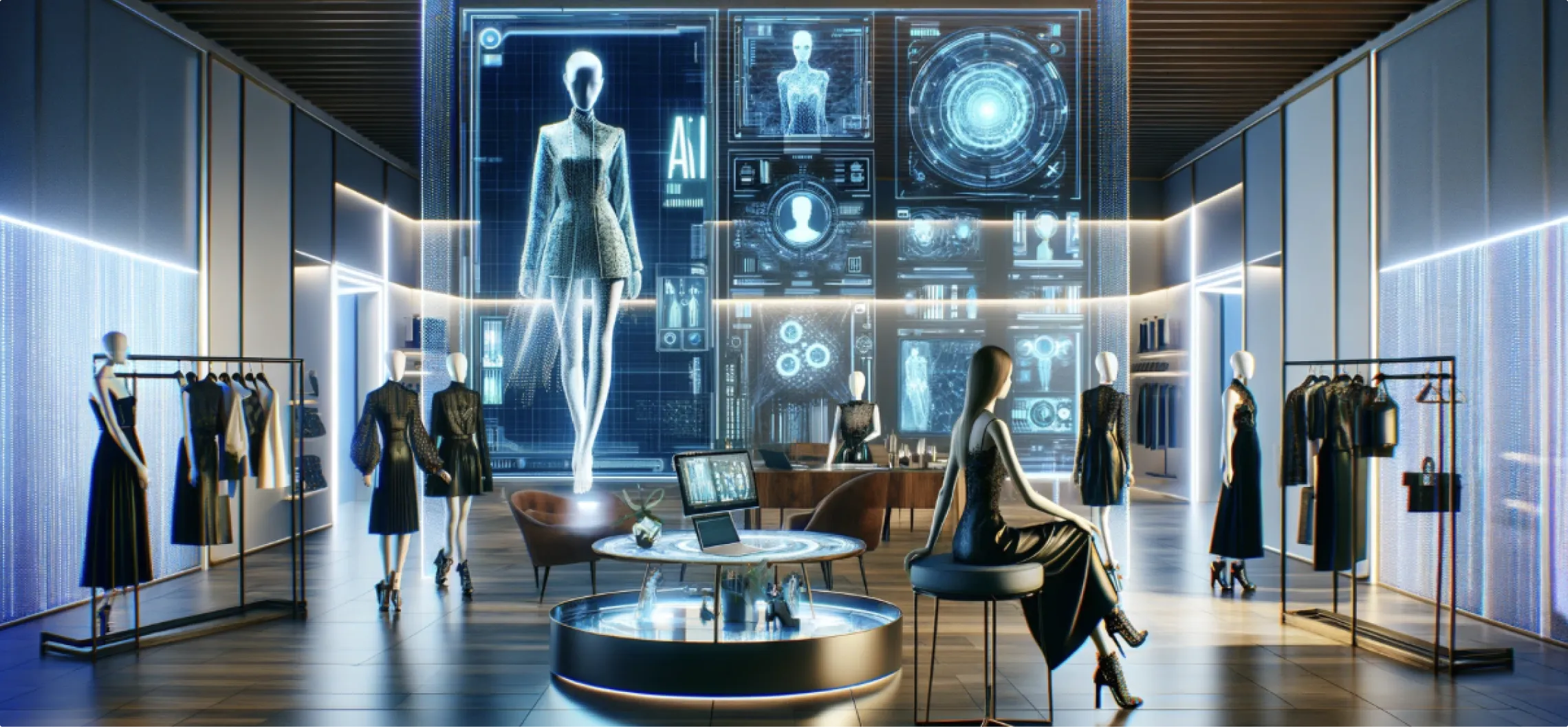

AI Assistants in High-Traffic Immersive Environments

Modern brand activations run on layered technology. Holographic displays, object recognition tables, projection mapping, AR overlays — these systems work in concert. AI assistants anchor the visitor experience, responding to queries in real time and narrating product stories without a script.

The scale and pace of live events stress-test these systems constantly.

500 simultaneous interactions. Visitors speaking in regional dialects. Queries that shift mid-conversation. Standard AI deployments are not built for that variability. The result: response delays, off-brand outputs, sentiment drops, and frustrated visitors who disengage before the conversion moment.

The cost isn't just a poor experience. It's measurable — in lead captures, dwell time, and brand recall.

Operational Challenges in Live Deployment

The failure points in live AI deployments follow a consistent pattern.

Accent and dialect mismatch reduces transcription accuracy significantly. In markets like Mumbai, where visitors routinely switch between Hindi and English within a single sentence, standard language models lose context. The AI responds to what it understood, not what the visitor meant.

Multi-input environments push systems into processing backlogs. When multiple visitors interact with adjacent stations at the same time, processing queues back up, response times stretch, and the interaction stalls.

Off-script queries are inevitable. Visitors ask about pricing bundles, regional availability, technical configurations, or after-sales support — topics the AI may not handle with sufficient precision. Without a fallback, the system either produces a generic response or falls silent.

These aren't edge cases. At high-footfall activations, they occur constantly.

Human-in-the-Loop Controls: Operational Architecture

The approach centers on real-time operator oversight embedded directly into the activation workflow.

Operators monitor live transcripts and sentiment feeds from a centralized dashboard. Every visitor interaction generates a data stream — query content, AI confidence scores, sentiment indicators, response latency. When the system detects an anomaly, it flags it automatically.
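Here is a minimal sketch of one record in that data stream and the automatic anomaly check. The field names, the sentiment scale (-1 to 1), and the sentiment and latency limits are illustrative assumptions. Only the 85% confidence floor comes from the escalation rule described in this article.

```python
from dataclasses import dataclass

# Hypothetical limits for illustration; only the 0.85 confidence floor is
# taken from the escalation rule described in the article.
CONFIDENCE_FLOOR = 0.85
SENTIMENT_FLOOR = -0.3      # assumed sentiment scale: -1.0 (negative) to 1.0 (positive)
LATENCY_CEILING_S = 2.0     # assumed acceptable AI response latency, in seconds


@dataclass
class InteractionEvent:
    """One entry in a station's live data stream (hypothetical schema)."""
    station_id: str
    query: str
    confidence: float   # AI confidence score, 0.0 to 1.0
    sentiment: float    # visitor sentiment indicator, -1.0 to 1.0
    latency_s: float    # AI response latency in seconds


def detect_anomalies(event: InteractionEvent) -> list[str]:
    """Return the reasons this event should be flagged (empty list if none)."""
    reasons = []
    if event.confidence < CONFIDENCE_FLOOR:
        reasons.append("low_confidence")
    if event.sentiment < SENTIMENT_FLOOR:
        reasons.append("negative_sentiment")
    if event.latency_s > LATENCY_CEILING_S:
        reasons.append("high_latency")
    return reasons


event = InteractionEvent("station-2", "bundle price kya hai?", 0.62, -0.4, 2.6)
print(detect_anomalies(event))  # ['low_confidence', 'negative_sentiment', 'high_latency']
```

In a live deployment the flag list would feed the operator dashboard rather than a print statement.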

Escalation triggers at an 85% confidence threshold. When the AI's confidence score for a response drops below that value, the system initiates a handoff protocol. The AI pauses, a trained operator receives the query, and the operator responds via an avatar overlay or audio channel, seamlessly and in under two seconds.
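The handoff sequence can be sketched as a small state machine. This is a hypothetical Python illustration, not a description of any specific product. The `Session` class, its `Mode` states, and the `operator_reply` callback are assumptions. The 85% threshold and the two-second target come from the text.

```python
import time
from enum import Enum
from typing import Callable

ESCALATION_THRESHOLD = 0.85   # from the article: escalate below 85% confidence
HANDOFF_TARGET_S = 2.0        # from the article: operator responds in under two seconds


class Mode(Enum):
    AI_ACTIVE = "ai_active"
    OPERATOR_ACTIVE = "operator_active"


class Session:
    """Hypothetical per-visitor session that hands off to an operator on low confidence."""

    def __init__(self, session_id: str) -> None:
        self.session_id = session_id
        self.mode = Mode.AI_ACTIVE
        self.handoff_times: list[float] = []

    def respond(self, query: str, ai_answer: str, confidence: float,
                operator_reply: Callable[[str], str]) -> str:
        if confidence >= ESCALATION_THRESHOLD:
            return ai_answer                     # AI keeps the turn
        self.mode = Mode.OPERATOR_ACTIVE         # AI pauses; operator takes the query
        start = time.monotonic()
        reply = operator_reply(query)            # e.g. via avatar overlay or audio channel
        self.handoff_times.append(time.monotonic() - start)  # escalation-to-reply time
        self.mode = Mode.AI_ACTIVE               # AI resumes the session afterwards
        return reply

    def within_target(self) -> bool:
        """True if every recorded handoff met the two-second target."""
        return all(t < HANDOFF_TARGET_S for t in self.handoff_times)


session = Session("visitor-001")
print(session.respond("What does the bundle include?", "Here are the bundle details...",
                      0.92, lambda q: "unused"))   # Here are the bundle details...
print(session.respond("Custom config for my studio?", "I'm not sure.",
                      0.61, lambda q: "Operator: let me walk you through that."))
# Operator: let me walk you through that.
```

Timing only the operator callback is a simplification. A real measurement would also include routing and delivery to the visitor.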

The visitor experiences no interruption. The brand messaging stays intact.

Post-handoff, the AI resumes the session. Operator inputs are logged to the CRM. Each intervention feeds a refinement cycle that improves model performance over subsequent sessions.
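Here is one way to structure that logging step. The record fields mirror the context the article lists for CRM logging: query, response, confidence score, and visitor profile data. The article names no specific CRM API, so the `InterventionRecord` type, the `to_crm_payload` and `to_refinement_example` helpers, and the JSON shape are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class InterventionRecord:
    """Hypothetical record of one operator intervention."""
    session_id: str
    query: str
    ai_response: str          # what the AI produced before the handoff
    operator_response: str    # what the operator actually said
    confidence: float
    visitor_profile: dict = field(default_factory=dict)
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_crm_payload(record: InterventionRecord) -> str:
    """Serialize the record as JSON for whatever CRM endpoint the deployment uses."""
    return json.dumps(asdict(record))


def to_refinement_example(record: InterventionRecord) -> dict:
    """Pair the visitor query with the operator's answer as a refinement target."""
    return {"prompt": record.query, "target": record.operator_response}


rec = InterventionRecord(
    session_id="visitor-001",
    query="Custom config for my studio?",
    ai_response="I'm not sure.",
    operator_response="Here is how that configuration works...",
    confidence=0.61,
    visitor_profile={"lead_id": "L-1042"},   # illustrative value
)
payload = to_crm_payload(rec)
example = to_refinement_example(rec)
```

Keeping the AI's original answer next to the operator's answer lets later analysis see exactly where the model fell short.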

Core Features of the Control Framework

Real-time monitoring dashboards display live transcripts, confidence scores, sentiment shifts, and visitor flow across all interactive stations simultaneously.

Automated escalation triggers activate when confidence drops below defined thresholds — no manual flagging required from the operator.

Audio handoff under two seconds routes complex or sensitive queries to human specialists without visible disruption to the visitor journey.

Multi-language and dialect support extends coverage to regional inputs, including code-switched language patterns common in Indian metro markets.

CRM integration logs every operator intervention with full context — query, response, confidence score, visitor profile data — enabling post-event model refinement.

Support for 500+ concurrent users keeps performance stable through footfall peaks.

Edge device deployment keeps latency low even in venues with constrained network infrastructure.
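A common pattern stops hundreds of simultaneous sessions from flooding a shared processing queue: cap how many model calls run at once and let the rest wait briefly. The `asyncio` sketch below illustrates that pattern, not how any particular system reaches 500+ concurrent users. It assumes a hypothetical `call_model` coroutine, simulated with `asyncio.sleep`, and an arbitrary limit of 32 concurrent calls.

```python
import asyncio

MAX_CONCURRENT_CALLS = 32   # assumed capacity of the inference backend


async def call_model(query: str) -> str:
    """Stand-in for a real inference call (hypothetical)."""
    await asyncio.sleep(0.01)
    return f"answer to {query}"


async def handle_session(session_id: int, limiter: asyncio.Semaphore,
                         stats: dict) -> str:
    async with limiter:                      # wait here if the backend is saturated
        stats["in_flight"] += 1
        stats["peak"] = max(stats["peak"], stats["in_flight"])
        try:
            return await call_model(f"query {session_id}")
        finally:
            stats["in_flight"] -= 1


async def run_peak(n_sessions: int = 500) -> tuple[list[str], int]:
    """Simulate a footfall peak and report the highest concurrency reached."""
    limiter = asyncio.Semaphore(MAX_CONCURRENT_CALLS)
    stats = {"in_flight": 0, "peak": 0}
    results = await asyncio.gather(
        *(handle_session(i, limiter, stats) for i in range(n_sessions))
    )
    return list(results), stats["peak"]


answers, peak = asyncio.run(run_peak())
print(len(answers), peak)
```

Bounding concurrency trades a short wait for some sessions against a backend that never falls into an unbounded backlog.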

Mumbai Product Launch: Control System in Practice

A leading consumer electronics brand activated a 360-degree immersive zone in Mumbai. The installation featured holographic product demonstrations, object recognition tables, and AI-powered assistants across three stations. Daily footfall exceeded 1,000 visitors.

Initial AI trials identified three consistent failure points.

Visitors queried pricing bundles in mixed Hindi-English. The AI misread code-switched inputs and returned generic responses. When visitors asked about custom device configurations, the system lacked sufficient product-specific context and produced inaccurate outputs. During peak footfall windows, response latency increased to the point where visitors visibly disengaged.

The deployment integrated human-in-the-loop controls across all three stations.

Operators — trained brand specialists with full product knowledge — monitored live dashboards. Object recognition tables fed visitor behavior data into the oversight system. When a visitor scanned a device and moved beyond standard feature queries, the escalation protocol activated. The specialist responded via an avatar overlay. Average handoff time: 1.8 seconds.
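The trigger described here combines a device scan from the object recognition table with a query that goes beyond standard features. Below is a hypothetical Python version that also applies the general 85% confidence rule. The keyword-based topic check, the `STANDARD_TOPICS` catalogue, and the device IDs are illustrative assumptions. A production system would more likely use a trained intent classifier.

```python
import re
from typing import Optional

# Hypothetical catalogue: topics the AI is trusted to answer for each scanned device.
STANDARD_TOPICS = {
    "phone-x": {"camera", "battery", "display"},
    "laptop-y": {"battery", "display"},
}

# Hypothetical keyword map standing in for a real intent classifier.
TOPIC_KEYWORDS = {
    "camera": {"camera", "photo", "zoom"},
    "battery": {"battery", "charge", "charging"},
    "display": {"screen", "display"},
    "pricing_bundle": {"bundle", "price", "emi", "offer"},
    "custom_config": {"custom", "configure", "configuration"},
}


def detect_topic(query: str) -> Optional[str]:
    """Return the first topic whose keywords appear as whole words in the query."""
    tokens = set(re.findall(r"[a-z]+", query.lower()))
    for topic, words in TOPIC_KEYWORDS.items():
        if tokens & words:
            return topic
    return None


def needs_specialist(scanned_device: Optional[str], query: str,
                     confidence: float, threshold: float = 0.85) -> bool:
    """Escalate on low confidence, or when a scanned device's query leaves standard topics."""
    if confidence < threshold:
        return True
    if scanned_device is None:
        return False
    # Unrecognized topics (None) are escalated too, which errs on the side of a human.
    return detect_topic(query) not in STANDARD_TOPICS.get(scanned_device, set())


print(needs_specialist("phone-x", "How good is the camera zoom?", 0.93))   # False
print(needs_specialist("phone-x", "bundle ka price kya hai?", 0.91))       # True
```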

Outcomes over the activation period:

Visitor engagement time increased 42%

Lead captures rose 35%

AI error rate reduced to 3%

Post-event visitor satisfaction scored 92%

The brand attributed measurable conversion improvements directly to the quality of booth interactions. The data from operator interventions refined the AI model for subsequent sessions, compressing the error rate further across the activation run.

Applicability Across Formats

The control framework extends beyond product launches.

Retail experience centers use the same architecture for interactive walls and AI-guided product discovery. Visitor queries in physical retail show the same variability as those at live events, so the same oversight layer applies.

Pop-up activations deploy on edge devices with minimal infrastructure requirements. The system scales to match footfall without proportional increases in technical overhead.

Enterprise installations — full-scale Experience Centers with multiple interactive zones — benefit from centralized dashboards that manage oversight across all zones simultaneously.
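A centralized dashboard needs a per-zone rollup of the interaction stream. Here is a rough sketch. It assumes each event carries hypothetical `zone`, `confidence`, and `escalated` fields, and the sample events are invented for illustration.

```python
from collections import defaultdict
from statistics import mean


def zone_summary(events: list[dict]) -> dict[str, dict]:
    """Group interaction events by zone and compute dashboard figures."""
    by_zone: dict[str, list[dict]] = defaultdict(list)
    for e in events:
        by_zone[e["zone"]].append(e)

    summary = {}
    for zone, items in by_zone.items():
        escalations = sum(1 for e in items if e["escalated"])
        summary[zone] = {
            "interactions": len(items),
            "escalation_rate": escalations / len(items),
            "mean_confidence": round(mean(e["confidence"] for e in items), 3),
        }
    return summary


# Illustrative sample data, not results from any deployment.
events = [
    {"zone": "launch-hall", "confidence": 0.91, "escalated": False},
    {"zone": "launch-hall", "confidence": 0.62, "escalated": True},
    {"zone": "demo-tables", "confidence": 0.88, "escalated": False},
    {"zone": "demo-tables", "confidence": 0.95, "escalated": False},
]
summary = zone_summary(events)
```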

The underlying principle remains consistent: AI handles volume and speed, human oversight handles complexity and precision. The combination produces outcomes neither achieves independently.

Metrics That Matter to Decision-Makers

Dwell time, lead capture rates, conversion from activation to sales pipeline, visitor satisfaction scores — these are the figures that appear in post-event reviews. Human-in-the-loop controls directly influence every one of them.

Response quality determines engagement duration. Visitors stay longer when the interaction delivers accurate, contextually relevant information. The 42% increase in engagement time from the Mumbai activation reflects that directly.

Error reduction compounds over time. Each operator intervention refines the model. The activation improves through its own run, not just in retrospect.

Sentiment data informs campaign strategy. Every flagged query and operator response generates structured data. Post-activation analytics surface patterns — which queries escalated most frequently, which product features generated the most interest, where messaging misaligned with visitor expectations.
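Finding which queries escalated most often comes down to a frequency count over the intervention log. Here is a minimal sketch. It assumes each logged intervention has been tagged with hypothetical `topic` and `product` labels, and the sample log is invented for illustration.

```python
from collections import Counter

# Illustrative sample log, not data from any deployment.
interventions = [
    {"topic": "pricing_bundle", "product": "phone-x"},
    {"topic": "custom_config", "product": "laptop-y"},
    {"topic": "pricing_bundle", "product": "laptop-y"},
    {"topic": "pricing_bundle", "product": "phone-x"},
    {"topic": "after_sales", "product": "phone-x"},
]


def top_escalations(log: list[dict], key: str, n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequent values of `key` across escalated interactions."""
    return Counter(item[key] for item in log).most_common(n)


print(top_escalations(interventions, "topic"))
# [('pricing_bundle', 3), ('custom_config', 1), ('after_sales', 1)]
print(top_escalations(interventions, "product", n=1))
# [('phone-x', 3)]
```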

That data has value beyond the activation itself.

For brands running large-scale activations, the conversation around AI performance rarely focuses on the right variable. The question isn't whether the AI is capable — it's whether the deployment has the operational structure to handle what the AI can't. If your next activation involves complex visitor interactions at scale, that's the conversation worth having with your technology partners. Ink in Caps works with brand teams at the planning stage to build that structure into the activation design from the start — reach out to schedule a working session before your next brief closes.
