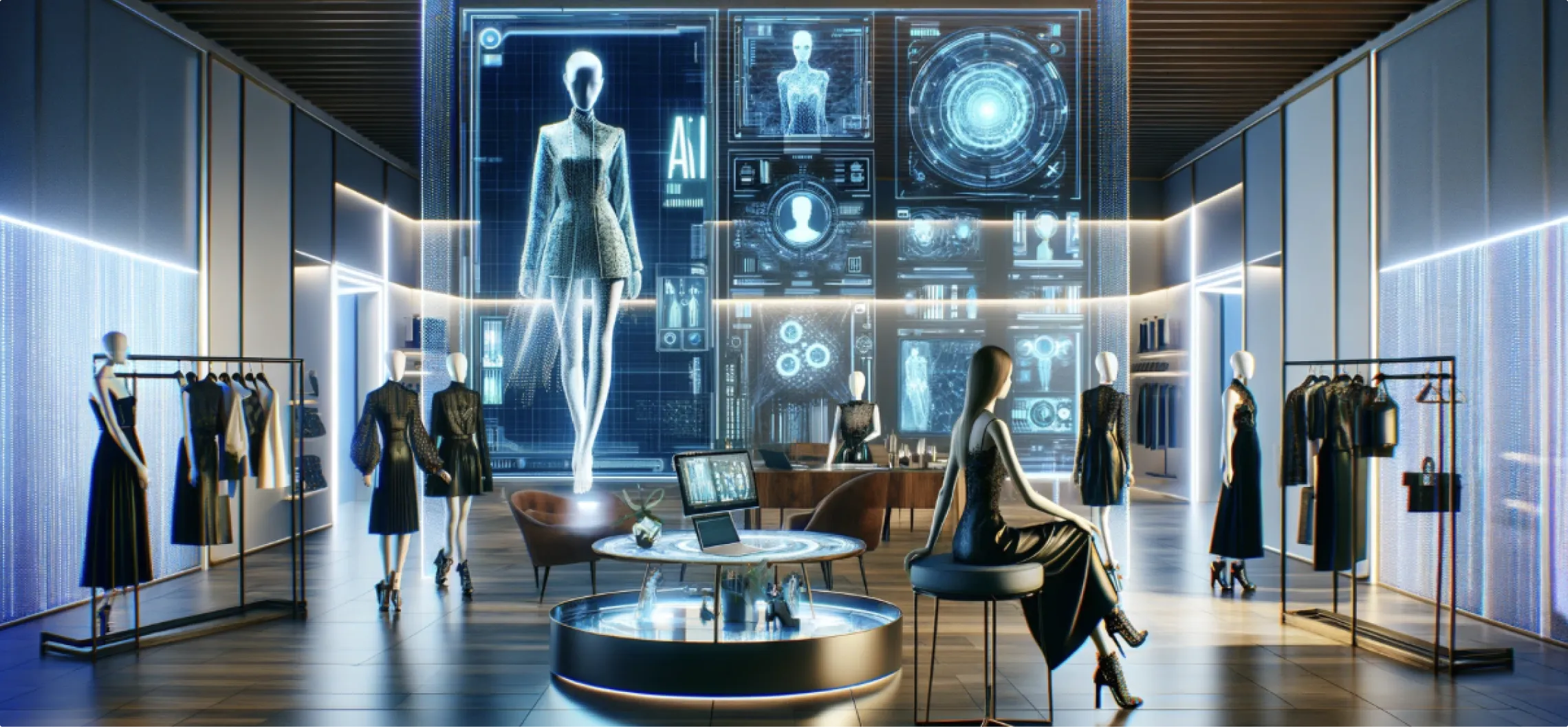

On-Site AI Inference for Real-Time Immersive Visuals

Live environments don't forgive latency. When an anamorphic facade flickers during peak foot traffic, or a projection mapping installation stutters mid-activation, the brand moment doesn't recover. The audience moves on. The opportunity closes.

This isn't a rendering problem. It's an infrastructure decision made too early in the process, one that most experiential teams only notice when it's already too late.

Real-Time Rendering Demands Local Processing Power

Cloud-dependent rendering pipelines carry an inherent vulnerability: network dependency. In controlled environments, that dependency stays invisible. In live deployments — stadium activations, flagship store launches, outdoor installations — it surfaces as lag.

A 2-second delay on an interactive wall isn't a technical glitch. It's a conversion killer.

Industry data from 2025 supports this. Event companies report up to a 30% drop-off in interaction rates when immersive displays perform inconsistently. For retail environments built around object recognition tables or position-triggered anamorphic content, even sub-500ms delays break the experience entirely.

The expectation from audiences has shifted. Immersive content at scale now competes with the precision of consumer-grade devices. Anything less reads as failure.

Edge Inference: Processing at the Source

On-site inference moves computation to the hardware closest to the experience. There's no roundtrip to a remote server. The device processes, renders, and responds — locally.

At Ink in Caps, deployments integrate NVIDIA Jetson and Qualcomm edge hardware directly into installations. Models execute on embedded GPUs. Visuals update at 60 FPS, regardless of crowd density or network availability.
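To make the 60 FPS target concrete, here is a minimal sketch of a frame-budget check. It assumes the stages run one after another within a single frame. The stage names and timings are hypothetical placeholders, not measurements from any deployment.

```python
from typing import Mapping

TARGET_FPS = 60
FRAME_BUDGET_MS = 1000 / TARGET_FPS  # roughly 16.67 ms available per frame


def headroom_ms(stage_ms: Mapping[str, float],
                budget_ms: float = FRAME_BUDGET_MS) -> float:
    """Time left in the frame after all stages run sequentially (negative = over budget)."""
    return budget_ms - sum(stage_ms.values())


def fits_frame_budget(stage_ms: Mapping[str, float],
                      budget_ms: float = FRAME_BUDGET_MS) -> bool:
    """True if the summed stage times fit inside one frame."""
    return headroom_ms(stage_ms, budget_ms) >= 0


# Hypothetical per-frame costs for an on-device pipeline (assumed values).
local_pipeline = {"sensor_read": 2.0, "inference": 8.0, "render": 5.0}

# The same pipeline with an assumed 40 ms network round trip added per frame.
remote_pipeline = {**local_pipeline, "network_round_trip": 40.0}

print(fits_frame_budget(local_pipeline))   # local stages sum to 15 ms
print(fits_frame_budget(remote_pipeline))  # remote stages sum to 55 ms
```

With these assumed numbers the local pipeline fits inside the frame and the remote one does not. Real pipelines often overlap stages, so treat this as a rough sanity check rather than a performance model.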

The performance outcomes are measurable:

80% reduction in bandwidth requirements across on-site deployments

Offline operation capability for remote or bandwidth-constrained venues

Consistent frame rates under variable load conditions

60% lower operational costs compared to equivalent cloud infrastructure

These aren't theoretical benchmarks. They come from live deployments across retail pilots and large-scale event activations — environments where performance has direct commercial consequences.

Retail Activation: Projection Mapping at Flagship Scale

A leading retail brand engaged Ink in Caps for a flagship store launch in a high-footfall urban location. The brief centred on a storefront anamorphic display — one that responded to pedestrian movement in real time and transitioned seamlessly into AR product trial triggers.

The problem with the initial architecture: cloud rendering introduced 2-second lags during peak hours. Foot traffic conversion sat at 15%. The display was visible. The experience wasn't landing.

The rebuild centred on a fully on-site inference pipeline.

Custom edge servers processed depth sensor feeds and camera inputs locally. Projection mapping adapted to pedestrian movement with no perceptible delay. The anamorphic content warped around the building's physical geometry in real time. AR product trial triggers activated on scan — with zero buffering.

The results across a 30-day, 24/7 deployment:

Dwell time increased by 45%

Conversion climbed from 15% to 28%

Zero operational downtime across the full activation period

Architecture extended cleanly to satellite store rollouts

The conversion shift wasn't driven by creative alone. It came from removing the friction that was silently degrading every interaction.
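For readers comparing the conversion figures above, a short worked calculation separates the absolute change (in percentage points) from the relative change. The helper name `uplift` is illustrative only.

```python
def uplift(before_pct: float, after_pct: float) -> tuple:
    """Return (absolute change in percentage points, relative change in percent)."""
    absolute_pp = after_pct - before_pct
    relative_pct = absolute_pp / before_pct * 100
    return absolute_pp, relative_pct


# Conversion figures reported for the 30-day deployment: 15% before, 28% after.
absolute_pp, relative_pct = uplift(15.0, 28.0)
print(f"{absolute_pp:.0f} percentage points, {relative_pct:.1f}% relative increase")
# prints "13 percentage points, 86.7% relative increase"
```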

Projection Mapping Volume, AR Integration, and the 50ms Standard

Projection mapping deployments grew 40% through 2025, with no sign of the trajectory flattening. The applications have expanded well beyond static surfaces to curved architecture, moving objects, and multi-surface environments with real-time calibration demands.

AR/VR integrations within live environments have established a practical threshold: under 50ms inference latency for interactions that feel natural. Above that threshold, the brain registers the disconnect. The experience reads as synthetic rather than responsive.

Edge inference, purpose-built for specific hardware configurations, consistently operates within this window. Cloud alternatives, by architecture, cannot reliably match it in variable network conditions.
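One way to test "reliably within 50ms" is to check a tail percentile rather than the average, since variable network conditions mostly show up as occasional spikes. The sketch below uses a nearest-rank percentile. The sample latencies are invented for illustration and do not come from any deployment.

```python
import math

THRESHOLD_MS = 50.0  # the under-50ms figure cited in the text


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty sequence of numbers."""
    if not samples:
        raise ValueError("samples must be non-empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def within_threshold(samples_ms, pct=95, threshold_ms=THRESHOLD_MS):
    """True if the given percentile of observed latency is under the threshold."""
    return percentile(samples_ms, pct) < threshold_ms


# Hypothetical end-to-end latencies in milliseconds (assumed values).
edge_ms = [18, 20, 19, 22, 21, 20, 23, 19, 20, 24]
cloud_ms = [35, 38, 40, 120, 37, 42, 300, 39, 36, 41]

print(within_threshold(edge_ms))           # 95th percentile is 24 ms
print(within_threshold(cloud_ms))          # 95th percentile is 300 ms
print(within_threshold(cloud_ms, pct=50))  # median is 39 ms
```

In this made-up data the cloud path has a median under the threshold but fails at the 95th percentile. That gap between typical and worst-case latency is what separates "usually fast" from "reliably fast".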

For enterprise teams running Experience Centers — environments combining interactive walls, holographic tables, object recognition systems, and AI-powered assistants — on-site processing also eliminates the compliance exposure that comes with external data transmission. All processing stays local. No user data leaves the installation.

Immersive Infrastructure Built for Decision-Makers

The business case for on-site inference consolidates quickly when framed against what's at stake in high-investment activations.

Lower cost per deployment. Edge setups cut operational costs by 60% relative to comparable cloud-dependent deployments.

Higher activation reliability. Installations that run offline don't expose brands to network failure during peak moments.

Scalable architecture. The same pipeline that powers a flagship activation extends to satellite locations without infrastructure overhauls.

Data security by design. Local processing removes the attack surface and compliance risk inherent in transmitting audience data externally.

For brand managers evaluating vendors on technical reliability rather than capability claims, the distinction matters. On-site inference isn't a feature — it's an architectural commitment to consistent performance under real-world conditions.

3D Visualization and Experience Center Deployment

Beyond event activations, the same edge infrastructure powers 3D architectural walkthroughs in permanent Experience Centers. Users navigate VR environments without buffering. Holographic tables trigger contextual overlays from physical objects. Sales teams run live product demonstrations in fully rendered virtual contexts.

The performance characteristics that define great live activations apply equally here: low latency, scalability, and resilience to the variability of real-world attendance patterns.

If your next activation, product launch, or Experience Center build demands this level of infrastructure thinking, Ink in Caps' technical team works through the deployment architecture with you before a single line of code executes. Reach out to schedule a scoped consultation.

